A new study shows that about half of Kepler’s giant exoplanet candidates aren’t real planets. Astronomers expected this.

Haven Giguere / Nikku Madhusudhan

In the midst of a slew of exoplanet results from the Extreme Solar Systems III conference in Hawai‘i last week, one study garnered a lot of attention following a press release headline that read: “Half of Kepler’s Giant Exoplanet Candidates are False Positives.”

The study at the heart of the hubbub was a follow-up on potential giant planets detected by NASA’s Kepler satellite, which over four years found exoplanet candidates by the thousands. It turns out that a large number — roughly 55% of Kepler’s list of giants, the study authors say — might not be planets after all.

That might sound shocking. But it’s not: these results were largely expected.

The Study

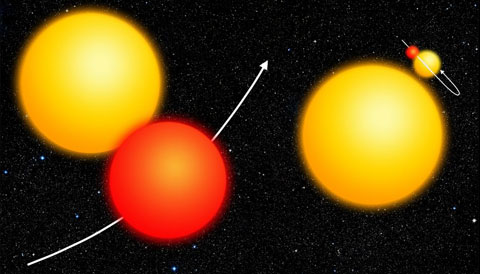

NASA / Ames Research Center

Alexandre Santerne (University of Porto, Portugal, and Aix Marseille University, France) and colleagues followed up on Kepler’s long list of planet candidates during a five-year observing campaign with the 1.93-meter telescope at the Observatory of Haute-Provence in France. Kepler detected planets by their transits, the slight dimming of a star’s light they cause when they pass in front of it. Using the SOPHIE spectrograph, Santerne’s team sought to confirm these starlight dips’ planetary status by measuring the worlds’ tiny gravitational tugs on their stars. The instrument could detect radial-velocity wiggles as small as 2 meters per second.

Small planets cause too weak a wiggle for SOPHIE to discern, so the team could only follow up on giants. Rather than choosing targets based on a candidate’s radius, whose measurement can be uncertain, the team chose targets by their transit depth: the larger the planet, the more light it will block. They also chose only those planets with orbits taking less than 400 days.
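To see why transit depth favors giants, here is a minimal sketch of the standard geometric approximation, in which the fractional dimming is roughly the square of the planet-to-star radius ratio. The radii are standard reference values for the Sun, Jupiter, and Earth rather than numbers from the study, and the function name is illustrative. The sketch ignores limb darkening and grazing transits.

```python
# Approximate radii in kilometers (standard reference values, not from the study).
R_SUN_KM = 695_700
R_JUPITER_KM = 71_492
R_EARTH_KM = 6_371


def transit_depth(planet_radius_km, star_radius_km=R_SUN_KM):
    """Fractional dimming when an opaque planet crosses a uniformly bright star."""
    return (planet_radius_km / star_radius_km) ** 2


depth_jupiter = transit_depth(R_JUPITER_KM)
depth_earth = transit_depth(R_EARTH_KM)

print(f"Jupiter-size planet, Sun-like star: {depth_jupiter:.2%}")
print(f"Earth-size planet, Sun-like star:   {depth_earth:.4%}")
```

Under these assumptions, a Jupiter-size planet dims a Sun-like star by about 1%, more than a hundred times the dip from an Earth-size planet.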

In the end, Santerne and colleagues spent 370 nights between July 2010 and July 2015 observing 129 of Kepler’s more than 4,000 planet candidates.

Of these, only 45 turned out to be bona fide planets. The rest were brown dwarfs (3) or multiple-star systems (63, 48 of which were eclipsing binaries); for an additional 18 cases the team could reject both these alternatives but still couldn’t confirm the planet. Even if all of those 18 cases turn out to be planets, 51% of Kepler’s giant potential planets would still turn out not to be real.
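The 51% floor follows directly from the counts above. Here is a minimal sketch of that arithmetic, with illustrative variable names. The roughly 55% figure quoted earlier is the study authors' own estimate and is not reproduced here.

```python
# Counts reported for the 129 candidates that were followed up.
counts = {
    "planets": 45,
    "brown_dwarfs": 3,
    "multiple_star_systems": 63,
    "unresolved": 18,
}

total = sum(counts.values())

# Best case for the planets: assume every unresolved candidate is real.
false_positives = counts["brown_dwarfs"] + counts["multiple_star_systems"]
min_false_positive_rate = false_positives / total

print(f"{false_positives} of {total} = {min_false_positive_rate:.0%}")
```

Even in that best case, 66 of the 129 candidates are not planets.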

That’s a lot higher than what previous studies have found. Most recently, Francois Fressin (Harvard University) and colleagues found a 20% false-positive rate for giant planets.

A higher “fake” rate could have implications not just for the tally but for our understanding of these worlds. For example, once they removed false positives from the group, Santerne’s team saw three distinct populations of giant planets: hot Jupiters that orbit their star in a few days, temperate giants more like those in our own solar system, and a third type with orbits in between. Other studies based on the Kepler candidate list haven’t recognized these separate populations because their samples were too contaminated, Santerne’s team argues.

No Surprise

Santerne’s work is important: it’s the largest radial-velocity study of Kepler planet candidates conducted so far. But is the high false-positive rate really surprising? Not at all, says Tim Morton (Princeton University), an expert on Kepler data.

“The reason this apparent false-positive rate from this study is so high is mostly because the Kepler team has been very generous with the last few data releases with what has been called a ‘candidate,’” Morton says.

In the early years, explains Kepler mission scientist Natalie Batalha (NASA Ames), the Kepler team automatically marked any potential planet with more than twice Jupiter's radius as a false positive. But Kepler couldn't always measure planet sizes with a high degree of accuracy. Moreover, the team worried that they were throwing away objects in the divide between planets and the failed stars known as brown dwarfs.

So the Kepler team stopped throwing out planet candidates based on size alone, which increased the number of "fakes", but now enables scientists to study the occurrence rate of brown dwarfs and compare it against that of giant planets.

So Batalha sums up the study differently than the news headlines did. Santerne's team found that warm Jupiters, Jupiter-size giant planets no farther from their parent star than Earth is from the Sun, are 15 times more common than brown dwarfs in similar orbits, she says. "THAT is the real news!"

Morton, in turn, is calculating, for every candidate in Kepler’s list, the probability that it is not a real planet. His results are due out soon. But he has already compared his calculations against Santerne’s observations and found they match up. Researchers will use Morton's calculations and Santerne's follow-up observations to decide which potential planets to include in future studies of, for example, giant planet populations.

Morton also stresses that the Santerne results are specific to giant planet candidates — all indications are that the false-positive rate for smaller candidate planets is still low.

* Updated with additional comments from Natalie Batalha on December 11, 2015.

Comments

Richard-Lighthill

December 10, 2015 at 8:03 pm

While, as this article says, false positives (even a high percentage of them) were "expected," I don't ever remember hearing or reading of this "expectation." Perhaps it is the media's fault, perhaps it is the "lay astronomer's" fault (aka "wishful thinking") or perhaps it is the scientist's fault (anything for more money from the government or educational institutions) for "getting our hopes up." Whoever the "fault" lies with, the fact is that there is too much hype in the scientific community and little patience to "see where the data leads" us. Count the number of retractions from over-zealous reporters and scientists and the major players (NASA and ESA) in the past year and you will begin to wonder "What do we REALLY know?" Let's stop being "generous" with our data and instead be far more cautious.

Tom Hoffelder

December 11, 2015 at 10:10 am

Excellent comment Richard! It seems like about 50% of all "scientific" findings these days are soon found to be wrong! Many defend that by saying it shows science is working. I say it is bad publicity for science, which perhaps is the reason so many people, in this country anyway, believe in anything and everything but science.

Kevin

December 11, 2015 at 11:23 pm

Your figure of 50% sounds rather high and I'd like to see some empirical data that supports your conclusion but yes, the self-correcting nature of science is what leads to the truth. Unlike religion, science questions itself all the time and refuses to settle for half truths or "That's close enough." Good science welcomes scrutiny whereas religion runs from it. Deus ex machina is a luxury that science can't afford. I've focused on religion because it seems to be recognized as science's only "competitor."

Margarita

December 12, 2015 at 3:49 am

Do you think you ought to let the publishers of the Very Short Introduction series know that religion is a recognised competitor to science? They have, presumably inadvertently, got an Anglican priest to write the VSI to Quantum Theory.

http://www.amazon.com/Quantum-Theory-Very-Short-Introduction/dp/0192802526/ref=sr_1_1?s=books&ie=UTF8&qid=1449906182&sr=1-1

Monica Young (Post Author)

December 12, 2015 at 10:04 am

Dear Richard, After you posted your comment, I received commentary from Natalie Batalha that explains further what's meant by "generous". In my personal opinion, I don't think there is blame to be laid here: scientists did what they're supposed to do - they collected data, analyzed it, collected follow-up observations, analyzed further, and so on in the ongoing process of science. The media did what they're supposed to do, reporting information as it becomes available. (Sure, I suppose we could wait for developments, but that could mean waiting a long time and missing out on readers in the meantime. As it is, I waited a week to post this story so that I could include comments from experts outside the study.) And readers did what they're supposed to do, getting excited about exciting results. If anything, I hope that stories like this one serve to portray science not as a collection of facts but as a method.

Kevin

December 11, 2015 at 11:07 pm

Oh well, as Ponce de Leon said when he failed to find the Fountain of Youth, "Dang it! Crap! That's it, I'm going home."
