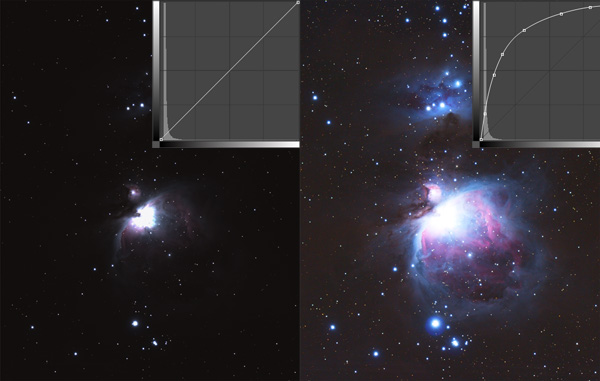

Images straight from a digital camera’s sensor appear very dark and low in contrast. This is true of daytime photographs as well as long-exposure deep-sky images, and the reason is that raw data is linear. To make details visible, we must apply a non-linear transformation and s – t – r – e – t – c – h the data.

Digital cameras work by turning photons into electrons, measuring the voltage those electrons create, and then converting that measurement into a number that represents how many photons each pixel on the detector recorded. The details are considerably more complicated, but this is essentially what a digital camera does: it turns photon counts into numbers, and those numbers are converted back into light intensity when the image is displayed. That is where digital cameras get their name; “digital” refers to data stored as numbers (digits). Dark areas in an image are where fewer photons were recorded, while bright areas (such as the cores of stars) result from an abundance of recorded photons.

Digital cameras record and measure photons in a linear manner. In an idealized sensor, each photon that hits a pixel releases one electron, which is recorded as a count of one; two photons give a count of two, and so on. Real sensors do not convert every photon (their quantum efficiency is below 100%), and the camera’s gain sets how many electrons make up one count, but the count stays proportional to the light received: every time you double the number of photons, you double the count.
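The proportional relationship can be written as a tiny model. This is a minimal sketch, not how any particular camera works: the quantum efficiency (`qe`) and `gain` parameters and their values are assumptions chosen for illustration, and real sensors add offsets and noise that are left out here.

```python
def photons_to_counts(photons: float, qe: float = 1.0, gain: float = 1.0) -> float:
    """Idealized linear sensor model (an illustrative assumption).

    qe:   quantum efficiency, the fraction of photons that free an electron
    gain: electrons per recorded count
    """
    electrons = photons * qe
    return electrons / gain


# The ideal case: one photon -> one count, two photons -> two counts.
print(photons_to_counts(1), photons_to_counts(2))  # 1.0 2.0

# With made-up, camera-like QE and gain, doubling the light still doubles the count.
one_x = photons_to_counts(1000, qe=0.6, gain=2.0)
two_x = photons_to_counts(2000, qe=0.6, gain=2.0)
print(one_x, two_x, two_x / one_x)
```

Because the model is just a multiplication, any change in QE or gain scales every pixel by the same factor, which is exactly what “linear” means here.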

This linearity is what makes digital cameras so powerful. Because the data are linear, you can add or average many individual exposures to improve the signal-to-noise ratio of the result, and the cleaner stacked image can then be stretched harder to reveal fainter objects.
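A quick simulation shows why stacking helps. This sketch assumes a single pixel with a made-up true brightness and purely random (Gaussian) noise in each exposure; real data also contain fixed-pattern noise and other effects that averaging alone does not remove. Under those assumptions, averaging N frames should improve the signal-to-noise ratio by roughly the square root of N.

```python
import random
import statistics

random.seed(0)  # fixed seed so the run is repeatable

SIGNAL = 100.0       # hypothetical true pixel brightness, in counts
NOISE_SIGMA = 20.0   # hypothetical random noise per exposure, in counts
N_FRAMES = 16        # exposures averaged into one stack
TRIALS = 5000        # repeat the experiment many times to measure the noise


def exposure() -> float:
    """One simulated reading of the same pixel: true signal plus Gaussian noise."""
    return random.gauss(SIGNAL, NOISE_SIGMA)


singles = [exposure() for _ in range(TRIALS)]
stacks = [statistics.fmean(exposure() for _ in range(N_FRAMES)) for _ in range(TRIALS)]

snr_single = SIGNAL / statistics.stdev(singles)
snr_stack = SIGNAL / statistics.stdev(stacks)
print(f"single exposure SNR: {snr_single:.1f}")
print(f"{N_FRAMES}-frame average SNR: {snr_stack:.1f}")
print(f"improvement: {snr_stack / snr_single:.2f}x (sqrt({N_FRAMES}) = {N_FRAMES ** 0.5:.0f})")
```

Adding the frames instead of averaging them gives the same improvement, since the signal and the noise are both scaled by the same factor.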

While linear data is excellent for producing scientific measurements, it presents a problem when using digital cameras to produce pictures of the night sky. Human vision, and indeed all our senses, do not function in a linear manner. We experience the world in a way that more closely approximates a logarithmic curve.

For example, you can easily feel the difference between 1 and 2 pounds, but you can’t easily tell 100 pounds from 101 pounds, even though the difference in both cases is one pound. In the same way, our vision distinguishes differences between dim levels of brightness more easily than between bright ones. This is why noise is so easy to see, and so irritating, in the shadow areas of an image.
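The weight example can be put into numbers with a simple logarithmic model of perception. This is an approximation (in the spirit of the Weber-Fechner law), and reading the example as 1 pound versus 2 pounds is an assumption; the point is only the relative size of the two steps.

```python
import math


def perceived_change(before: float, after: float) -> float:
    """Perceived size of a change under a simple logarithmic model of the senses."""
    return math.log10(after) - math.log10(before)


light = perceived_change(1, 2)      # adding one pound to one pound
heavy = perceived_change(100, 101)  # adding one pound to 100 pounds

print(f"1 -> 2 lb:     {light:.4f}")
print(f"100 -> 101 lb: {heavy:.4f}")
print(f"the first step 'feels' about {light / heavy:.0f} times larger")
```

The same arithmetic applies to brightness: a few counts of noise on a dim pixel are a much larger relative change than the same few counts on a bright pixel.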

Digital Development

To turn the camera’s linear data into something that approximates our visual experience, we must apply a non-linear transformation. This is often referred to as the "digital development process" (DDP), a term coined by Dr. Kunihiko Okano, who developed the technique. DDP compresses the dynamic range of a linearly recorded image, brightening darker areas and increasing their contrast relative to the brighter areas of the same picture.
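To see what “compressing the dynamic range” means, here is a simple hyperbolic curve, x / (x + a), used as a stand-in for a DDP-style transformation. The curve choice, the rescaling, and the parameter `a` are assumptions for illustration; this is not Okano’s published procedure.

```python
def hyperbolic_stretch(x: float, a: float) -> float:
    """Map a linear value x (0..1) through x / (x + a), rescaled so 1 maps to 1.

    a is a hypothetical tuning parameter: smaller values brighten faint
    regions more strongly. This is only a basic curve shape, not a full
    implementation of DDP.
    """
    return (x / (x + a)) * (1 + a)


a = 0.05
for x in (0.01, 0.02, 0.5, 1.0):
    print(f"{x:5.2f} -> {hyperbolic_stretch(x, a):.3f}")
```

With these settings the faint values 0.01 and 0.02 end up about 0.125 apart in the output, while the bright range from 0.5 to 1.0 is squeezed into a span of roughly 0.05. Faint detail gains contrast at the expense of the highlights.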

DSLR cameras have a built-in computer that applies a generic non-linear curve when shooting in JPEG mode. RAW files instead store the linear sensor data, with the camera’s settings recorded in their metadata; the curve is applied when the file is opened in the image-processing program that comes with your DSLR (or in Adobe Lightroom and Photoshop). The nice thing about RAW images, and one of the best reasons to shoot in RAW format, is that you can adjust the parameters of this development process when you open the image. JPEG images, on the other hand, have these changes permanently applied, which makes it difficult to adjust them significantly later.

Astronomical image-processing programs often include several non-linear stretches, including DDP, ArcSinH (inverse hyperbolic sine), logarithmic, and square-root scaling, among others. It is the linear nature of digital sensors that makes them so powerful, and the digital development process that turns linear images into pretty pictures by revealing details initially hidden in the dark, low-contrast original data.
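Here is a rough comparison of a few common stretch shapes. In this sketch each function maps a normalized linear value in [0, 1] to a display value in [0, 1]; the strength parameter `k` and the example pixel value are assumptions, and real programs expose their own (different) controls.

```python
import math


# Each function maps a normalized linear value x in [0, 1] to a display value in [0, 1].
def linear(x: float) -> float:
    return x


def square_root(x: float) -> float:
    return math.sqrt(x)


def logarithmic(x: float, k: float = 1000.0) -> float:
    # k is a hypothetical strength parameter; dividing by log(1 + k) keeps 1 -> 1
    return math.log1p(k * x) / math.log1p(k)


def arcsinh(x: float, k: float = 1000.0) -> float:
    # asinh(y) behaves like y for small y and like log(2y) for large y,
    # so faint values are lifted almost linearly while bright ones are compressed
    return math.asinh(k * x) / math.asinh(k)


STRETCHES = {"linear": linear, "sqrt": square_root, "log": logarithmic, "asinh": arcsinh}

faint = 0.001  # e.g. a dim nebula at 0.1% of full scale (hypothetical)
for name, f in STRETCHES.items():
    print(f"{name:>6}: {faint} -> {f(faint):.3f}")
```

All four curves keep black at 0 and white at 1; they differ only in how much of the output range they hand to the faint end of the data.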

Comments

bwana

June 4, 2017 at 11:26 pm

A nice summary! Great for my outreach programs.

Thanks
