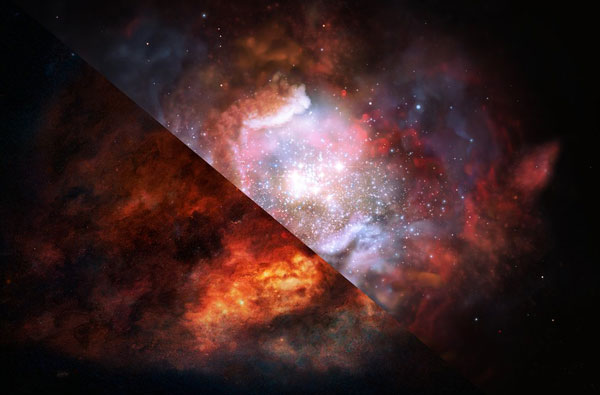

A new method of measuring star formation in the earliest galaxies finds that they’re producing more massive stars than expected — a result that could affect our understanding of how galaxies grow their stars.

A few months ago, Sky & Telescope reported on a study of a nearby star-forming region (30 Doradus) forming an unexpected number of massive stars. The region might even contain stars with up to 300 times the mass of the Sun — but that wasn’t the real surprise.

Astronomers had thought that the same basic processes ought to shape star formation no matter where it happens, resulting in the same relative numbers of stars everywhere. If that turned out not to be true — and 30 Doradus seemed to be proving the exception — then astronomers would have to rethink everything, from how they classify galaxies to how quickly the universe formed its stars.

NASA / ESA / D. Lennon / E. Sabbi (STScI) et al.

But it’s difficult to reach such conclusions on the basis of a single star-forming region, no matter how well it has been studied. Now, new work published in Nature appears to confirm that star formation depends not only on fundamental processes but also on environment. Astronomers may have to do some rethinking after all.

Four Distant Starbursts

The focus in this study turns to four galaxies whose light took more than 10 billion years to travel to Earth. These galaxies are bursting with stars, but they’re also dusty, which makes them immune to methods requiring ultraviolet, visible, or infrared light. Instead, Zhi-Yu Zhang (University of Edinburgh, UK, and European Southern Observatory, Germany) and colleagues trained the Atacama Large Millimeter/submillimeter Array (ALMA) on these galaxies, searching for emission from carbon monoxide, a signal tied to a galaxy’s history of star formation.

ALMA’s incredible resolving power received a helping hand in the form of gravitational lenses: foreground galaxies aligned just so. Their gravity acted as a cosmic lens to magnify the light from these distant starbursts.

ALMA (ESO / NAOJ / NRAO) / Zhang et al.

The scientists measured two isotopologs of carbon monoxide: 13CO and C18O. 13C (which contains one more neutron than ordinary carbon atoms) is released by stars of all masses, whereas 18O (which contains two extra neutrons compared to ordinary oxygen atoms) is released only by more massive stars. Since more massive stars live brief lives, measuring the relative abundance of 13CO and C18O serves as a fossil record of how many massive stars formed relative to low-mass stars.

The signature is immune to what the study authors describe as “pernicious” effects of dust. But the authors also acknowledge that the measurement is a roundabout way of getting at the problem.

That’s because they’re observing carbon monoxide molecules that are floating in the gas between the stars, rather than measuring radiation from the stars themselves. So they’re in effect probing the galaxy’s entire history of star formation. Granted, galaxies in the early universe have a shorter history and thus less time for confounding effects to complicate the measurements, but as Kevin Covey (Western Washington University) points out, such effects are still possible.

ESO/M. Kornmesser

Redefining Cosmic Noon

If the measurements hold up, then what we dub “starburst” galaxies in the early universe aren’t actually making as many stars as we thought. Most stars have less than the Sun’s mass, but these young galaxies appear to be pouring more of their energy into making more massive stars. That means that other methods of estimating star formation rates might be wrong. In fact, our entire understanding of the history of cosmic star formation, which astronomers currently think peaked when the universe was roughly 4 billion years old, might be wrong.

Profound implications aside, Zhang’s team has a ways to go when it comes to convincing all of their colleagues. But they’re only getting started: Zhang says they’re already preparing more systematic surveys that will include nearby galaxies and multiple tracers of star formation.

Comments

Roger Venable

June 13, 2018 at 10:23 am

It seems to me that this study is inherently subject to biased results due to the effect of stellar life cycles.

Specifically, if you assume that massive stars form at a constant rate over billions of years, but each massive star has a brief life, then the rate of addition of oxygen-18 to the interstellar medium is constant over billions of years. Not so with dwarf stars. If you assume that dwarf stars form at a constant rate over billions of years, and each dwarf star can be expected to live a trillion years, then the rate of addition of carbon-13 to the interstellar medium increases nearly linearly with time, as every dwarf star that ever formed is still adding carbon-13 to the ISM.

This means that, as one looks back into the early universe by examining very distant galaxies as these researchers did, one will find a very different carbon-13 to oxygen-18 ratio from what one finds in nearby galaxies. The effect is to fool you into thinking that, billions of years ago, the ratio of the formation rate of massive stars to that of dwarf stars was greater then than it is today.

Did the researchers discuss this effect, and if so, how did they accommodate it in their calculations?

Thanks for considering this objection.

-- Roger V.

Zhi-Yu Zhang

June 19, 2018 at 9:41 am

Hi Roger V.,

Thanks for the excellent question. We did consider this effect. To do so, we applied a detailed galactic chemical evolution model to properly account for the stellar yields (elements expelled from stars) for different stellar masses, metallicities, and their corresponding lifetimes.

In particular, we note that the 18O return rate from massive stars is not constant in time. The yields of 18O, which is a secondary element, are in fact highly dependent on the metallicity of the parent stars, and they increase with time during the evolution of the galaxy.

This model has been benchmarked against Milky Way data: it can reproduce the abundance ratios found in both the stars and the interstellar medium of our Milky Way, as well as the Galactocentric gradient of the abundance ratios. So we are confident that the time-delay effect is properly treated.

I hope our reply answers your questions/comments. Please let me know if you have more concerns about our work.

Best wishes,

Zhi-Yu Zhang

Peter Wilson

June 20, 2018 at 11:58 am

In layman's terms: there were too many massive stars in the universe’s youngest galaxies. So many, in fact, that they changed the conditions which caused the over-production of massive stars. Problem solved...
